Between 2022 and 2024, nearly 364 zettabytes of data was created, and in 2025, it’s projected to surge another 150%. This explosion has turned data into one of the most valuable business assets. But messy, incomplete, or inconsistent information can grind your operations to a halt, slow down decision-making, and even put compliance at risk.

That’s why data quality engineering has become a necessity. By embedding validation, monitoring, and governance into every stage of the data lifecycle, you can ensure accuracy, consistency, and trust, resulting in smarter business outcomes.

In this article, we will explore what data quality engineering is, why it matters, and the practices, tools, and strategies that help you unlock its full potential.

What is Data Quality Engineering?

Data quality practices apply engineering principles to maintain high-quality data across collection, processing, and usage stages for seamless data management. It ensures that every dataset remains accurate, consistent, and usable at scale.

Think of it as the engineering backbone of data quality management—a system that optimizes operations by minimizing errors, improving data accuracy, and making business insights more reliable.

Key components of data quality engineering include:

- Data validation to verify accuracy before it enters critical systems.

- Data cleansing to remove errors, duplicates, or incomplete records.

- Data transformation to maintain consistency across systems.

- Continuous monitoring to track quality over time.

Together, these elements form the foundation of reliable data quality management.

For example, your retail company integrates sales data from both online and in-store transactions. Without data quality engineering, duplicate orders, missing customer details, or inconsistent product codes could skew your revenue reports.

With built-in validation, cleansing, and monitoring, the company ensures its sales data is trustworthy, enabling accurate forecasting and better inventory management.

Benefits of Data Quality Engineering for Seamless Operations

Organizations that invest in data quality engineering see measurable improvements across operations, compliance, and decision-making. Here’s how:

Improved operational efficiency

High-quality data reduces manual rework, prevents costly errors, and accelerates workflows. With clean, validated data, your teams spend less time correcting mistakes or reconciling discrepancies, which frees them for higher-value tasks.

For example, automated data quality checks can flag inconsistencies in real time, reducing delays in reporting and enabling smoother collaboration across departments.

Better data accuracy

Data quality engineering ensures analytics, reporting, and AI models are powered by reliable information. Accurate data helps you generate reliable predictive analytics, gain deeper customer insights, and run AI models that produce trustworthy recommendations, so you can make smarter, data-driven decisions.

Enhanced data compliance

Clean, consistent, and well-governed data supports regulatory requirements. By embedding quality and governance controls into your data lifecycle, you can automatically track sensitive data and ensure adherence to organizational policies. This reduces the risk of costly fines or compliance issues during audits and regulatory reviews.

Faster, informed decisions

When leaders can trust their data, they act with confidence and speed. High-quality data eliminates uncertainty in reporting and dashboards, allowing executives and managers to make timely decisions.

With reliable data, you can accurately forecast demand and allocate resources efficiently, helping your team respond quickly to market changes and stay ahead of competitors.

In short, data quality engineering creates a foundation of trust that powers operational agility and long-term growth.

How Data Quality Engineering Optimizes Operations

Now that we’ve seen the benefits of data quality engineering, let’s look behind the scenes at the processes that make it so effective.

Data validation and cleansing

Integrated into data pipelines, these processes catch errors, anomalies, and inconsistencies in real time. For example, missing customer information or duplicate transaction records can be flagged automatically and corrected before they enter downstream analytics or reporting systems. This reduces manual intervention and ensures operational workflows run efficiently.

Data integration and transformation

Data quality engineering ensures that information from multiple sources is harmonized while maintaining its integrity. By applying standardized transformations and validations, you can merge disparate datasets—such as sales, inventory, and customer behavior—without introducing inconsistencies.

Automated monitoring

Continuous data quality monitoring tools track metrics, detect data anomalies, and raise alerts proactively. This real-time oversight prevents low-quality data from affecting business intelligence, compliance, or decision-making. Teams can address potential issues quickly, minimizing operational disruptions and maintaining trust in enterprise data.

These practices ensure that data engineering workflows aren’t just moving data; they’re actively safeguarding its reliability, making your operations more resilient, accurate, and ready for business-critical decisions.

Key Practices That Make Data Quality Engineering Effective

To ensure data remains accurate, consistent, and actionable, data quality engineering relies on a set of core practices embedded throughout the data lifecycle. These practices allow you to not only detect and correct errors but also understand, monitor, and govern your data with confidence. They include:

- Data profiling and quality metrics: This practice helps you analyze the characteristics of your datasets and measure quality against defined thresholds. For instance, data profiling can reveal missing values in customer records that, if uncorrected, could impact reporting accuracy.

- Automation in data quality engineering: Automation minimizes human error, speeds up data processing pipelines, and enforces consistent quality standards across systems. Automated validation, cleansing, and monitoring reduce manual intervention, allowing your team to focus on higher-value tasks while ensuring data integrity is maintained at scale.

- Data lineage and tracking: Understanding where data originates, how it is transformed, and where it flows is critical for both trust and compliance. Tracking data lineage enables you to trace errors back to their source, assess the impact of changes, and maintain transparency for audits and regulatory reporting. This practice ensures that stakeholders can rely on the data for analytics, decision-making, and governance.

Together, these key practices form the backbone of effective data quality engineering, providing visibility, control, and confidence across your enterprise data ecosystem.

Tools and Technologies Driving Modern Data Quality Engineering

Modern data quality engineering is primarily driven by three interconnected technology categories: Data Observability platforms, Analytics Engineering tools, and Cloud-Native Data Warehousing. Dedicated data observability solutions, such as Acceldata, Monte Carlo, Dynatrace, Datadog, etc, leverage machine learning (ML) to automatically profile data, detect anomalies, and track metrics like freshness and schema drift without manual rule definition.

Tools like dbt (data build tool) shift quality checks left, enabling data teams to define and execute validation tests directly within the transformation layer using SQL.

Finally, cloud-native platforms (Snowflake, BigQuery, Databricks) provide the necessary scalability and unified compute power for running these complex, automated quality checks across vast, diverse datasets in real time.

When it comes to data quality engineering, the right tools and technologies are what make reliable data at scale possible. These platforms validate, cleanse, monitor, and enforce governance across data pipelines, helping you catch issues early, maintain consistency, and ensure accuracy from collection through consumption.

Acceldata, one of the leading platforms in this space, is designed to bring automation, intelligence, and reliability into data quality management.

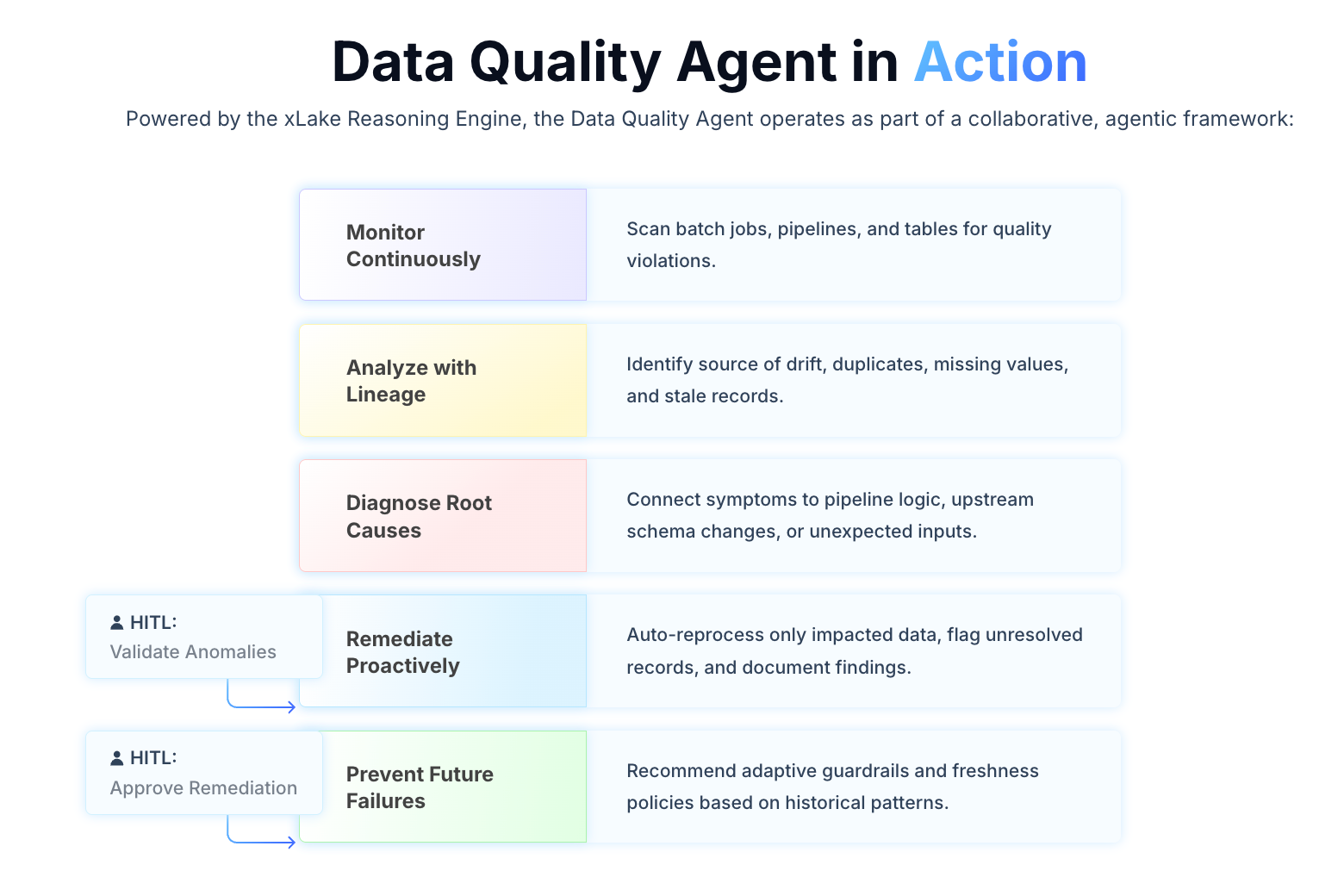

Acceldata’s Data Quality Agent, powered by the xLake Reasoning Engine, goes beyond traditional rule-based checks to provide continuous monitoring, diagnosis, and remediation across pipelines and datasets.

Here’s what makes Acceldata stand out:

- Deep data quality monitoring: Continuously checks batch jobs, pipelines, and tables for issues such as drift, duplicates, missing values, and stale records.

- Root cause analysis and remediation: Diagnoses problems caused by schema changes or unexpected inputs and either auto-reprocesses only what’s impacted or flags records for human review.

- Human-in-the-loop feedback: Lets users validate anomalies, override or approve remediation steps, and retrain detection logic, reducing false positives and ensuring quality controls match business context.

- Clear visibility and reporting: Provides data quality scores, highlights datasets with low completeness, traces when quality declines began, and generates reports for executives.

A global leader in commercial data and analytics, managing over 600 million business records across 250 markets, faced major challenges with slow and siloed data quality processes. Running 200+ validation rules across 500 billion rows of data often took weeks, delaying insights and creating operational inefficiencies.

By adopting Acceldata’s observability platform, the company cut processing time from 22 days to just 7 hours. Non-technical teams could build and deploy custom rules in under a day (down from a month), while reusable rules were scaled across 30,000 sources in 220+ countries.

The result: faster insights, reduced operational costs, and stronger trust in data delivered to customers worldwide.

Best Practices to Implement Data Quality Engineering Successfully

To make the most of your investment, you should follow these best practices that ensure consistency, scalability, and alignment with business goals:

- Set clear data quality standards: Define measurable thresholds for accuracy, completeness, timeliness, and consistency. Standards act as guardrails, helping teams evaluate whether data is fit for use across analytics, reporting, or compliance.

- Collaborate across teams: Data engineers, scientists, and business stakeholders must work together to shape rules and processes. Cross-functional input ensures that data quality initiatives not only meet technical requirements but also drive real business outcomes.

- Continuously refine your data processes: As your data ecosystem grows, update validation rules and monitoring workflows to keep data accurate and systems reliable. Regular improvements help you stay ahead of emerging issues and maintain trust in your data.

When these practices are embedded, data quality engineering becomes a sustainable driver of operational excellence.

Driving Operational Efficiency with Data Quality Engineering

High-quality data is your foundation for operational efficiency, compliance, and smarter decision-making. Data quality engineering helps you keep your data accurate, consistent, and reliable by embedding validation, cleansing, monitoring, and lineage tracking throughout its lifecycle.

By following best practices such as setting clear standards, collaborating across teams, and continuously refining processes, you can prevent errors, reduce manual work, and speed up workflows. Acceldata takes this further with automated monitoring, root cause analysis, and human-in-the-loop validation, so you get faster insights and stronger trust in your enterprise data.

When you implement data quality engineering effectively, raw data becomes a strategic asset that powers smarter decisions, boosts agility, and drives sustainable business growth.

Ready to unlock the potential of data quality engineering? Contact Acceldata to learn how our data quality engineering solutions can help optimize your data management processes, ensuring seamless operations and better decision-making. Request a demo today.

FAQs About Data Quality Engineering

1. What is data quality engineering, and why is it important for businesses?

Data quality engineering applies engineering principles to ensure accuracy, consistency, and usability of enterprise data. It is vital for businesses because reliable data supports compliance, decision-making, and operational efficiency.

2. How can data quality engineering improve operational efficiency?

By automating validation, cleansing, and monitoring, data quality engineering reduces manual rework and errors. This streamlines workflows and accelerates decision-making.

3. What are the key components of data quality engineering?

Core components include data validation, cleansing, transformation, continuous monitoring, and lineage tracking. Together, they ensure trustworthy and consistent data across systems.

4. How do data validation and cleansing work in data quality engineering?

Data validation tools check for accuracy before data enters critical systems. Data cleansing fixes errors, removes duplicates, and fills gaps to maintain data integrity.

5. What tools can be used for data quality engineering and monitoring?

Platforms like Acceldata, Talend, and Informatica automate validation, cleansing, and monitoring, integrating with pipelines to provide continuous oversight. What sets Acceldata apart is its Agentic Data Management, which combines automated monitoring with intelligent root cause analysis and human-in-the-loop validation. This helps you identify and resolve issues faster, while gaining a complete view of data lineage and quality across your ecosystem.

.png)

.webp)

.webp)