Enterprises in every industry and sector rely on data to support and sustain growth. That data must be clean and error-free so that business decision-makers are working with the highest-quality information. Any irregularity or error in the data pipeline can lead to heavy financial losses. This is where Data Observability comes into play.

Data observability is the practice of understanding and maintaining visibility into the data that flows through a company's technology infrastructure. In this blog post, we will explore the importance of a data observability platform and how it can help organizations make better decisions, collaborate more effectively, and achieve greater organizational agility.

Issues Associated with Modern Data Infrastructure

Before diving deeper into Data Observability Platforms, it helps to look at the complexities of operating modern data infrastructure, since they explain why Data Observability matters.

As data systems gain functionality and data teams gain access to a continuously evolving array of new data sources, it becomes increasingly challenging to ensure that the whole infrastructure works flawlessly. This leads to a range of issues, some of which are described below.

Growing Use of External Data Sources

The dependency on data for business operations is increasing every day. Companies are collecting data at high volume and velocity from a wide range of sources, including public government data sites, social media channels, and published sources. Often these data assets are flawed when they enter the environment, or they are not adequately maintained or monitored. When these flaws are not identified and corrected, they cause issues downstream.

Complex Transformations

When ingesting data from hundreds of sources, companies need to collect, transform, and structure all of it in the appropriate format to make it usable. With the increasing demand for data collection, storage, and usage, it is incumbent upon data teams to apply solutions that treat data with diligence, and a scalable Data Observability platform offers a comprehensive way to do so.

Using One-Size-Fits-All Data Pipelines

The growing use of data ingestion pipelines for massive data volumes has increased complexity across the industry. Modern tools have automated data ingestion and have become an integral part of the modern data stack. Their goal is to reduce the overall time it takes to make data usable for end users.

The demand for compliant access to high-quality data increases the need for data observability tools that provide tracking, visibility, and control over what happens to ingested data. Observability has proved to be an effective approach to data quality management because it creates healthier pipelines.

What is a Data Observability Platform?

Monitoring and observing your organization’s data becomes challenging as data volumes and the number of pipelines grow. Data observability tools and solutions are critical to staying up-to-date with data and keeping all data team members on the same page.

Manually tracking and observing data is time-consuming, arduous, and sometimes inaccurate. Because of the potential for mistakes, manual data observability isn’t ideal for data teams looking to interpret their metrics better. Other approaches can make the work of a data team much more manageable. Finding capable observability tools is essential to improving your data pipeline reliability and optimizing your data performance. With them, data teams can easily collect the critical metrics they need to understand data performance.

Therefore, teams should consider a data observability platform to address their immediate needs for analyzing data metrics. A multidimensional data observability platform like Acceldata stands out because it offers data teams advanced features for gaining insight into the performance, reliability, and cost of data at scale.

A data pipeline observability platform is one of the most valuable tools a data engineering team can invest in because of the accuracy of this type of software. Acceldata has features to ensure data reliability and efficiently predict, prevent, and resolve pressing data quality issues. The combination of Acceldata’s data reliability and observability tools helps create a data environment that is highly protected and advanced.

Acceldata offers comprehensive data pipeline observability tools. These tools allow data teams to track the journey from origin to consumption, increase pipeline efficiency and reliability, and align business and data strategies. Furthermore, Acceldata provides data teams access to the Data Observability Gartner Report.

This report helps data teams determine how best to adopt data observability platforms to modernize their data management. Acceldata combines its data observability tools with access to crucial information about data observability and metrics.

Components of Data Observability

Data observability tools help evaluate various issues related to data reliability and quality. Collectively, these issues are considered the primary pillars of data observability. When consistently monitored, each component offers insights that help enterprises gain a holistic view of pipeline and data health.

- Freshness: Data needs to be up-to-date to be useful. Observability helps ensure that data is updated as often as its consumers expect.

- Distribution: Data values should fall within expected ranges, and data is often spread across multiple systems. Observability helps ensure that values stay within those ranges and remain consistent across systems.

- Volume: More data means more complexity. Observability helps manage the complexity of large data sets.

- Schema: Data needs to be structured to be useful. Observability helps ensure that data is structured consistently.

- Lineage: Data has a history that is important for understanding its value. Observability helps track the lineage of data over time.
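To make the components above more concrete, here is a minimal sketch of what four of the checks might look like in plain Python. Everything in it is an assumption made for illustration: the function names, the thresholds, the sample `orders` rows, and the reading of "volume" as a row-count check and "distribution" as a check on the spread of values. Lineage is left out because it depends on pipeline metadata rather than on the data itself.

```python
from datetime import datetime, timedelta, timezone
from statistics import mean, pstdev


def check_freshness(last_updated, now, max_age):
    """Freshness: the newest record is no older than max_age."""
    return now - last_updated <= max_age


def check_volume(row_count, expected, tolerance=0.2):
    """Volume: row count is within +/- tolerance of the expected count."""
    return abs(row_count - expected) <= tolerance * expected


def check_schema(rows, expected_columns):
    """Schema: every row has exactly the expected column names."""
    return all(set(row) == set(expected_columns) for row in rows)


def check_distribution(values, baseline, max_z=3.0):
    """Distribution: batch mean is within max_z baseline standard deviations."""
    sigma = pstdev(baseline)
    if sigma == 0:
        return mean(values) == mean(baseline)
    return abs(mean(values) - mean(baseline)) / sigma <= max_z


# Hypothetical batch of an "orders" table, last loaded 30 minutes ago.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
rows = [{"order_id": 1, "amount": 20.0}, {"order_id": 2, "amount": 22.0}]

report = {
    "freshness": check_freshness(now - timedelta(minutes=30), now, timedelta(hours=1)),
    "volume": check_volume(len(rows), expected=2),
    "schema": check_schema(rows, ["order_id", "amount"]),
    "distribution": check_distribution(
        [r["amount"] for r in rows], baseline=[19.0, 21.0, 20.0, 22.0]
    ),
}
print(report)
```

A real platform would learn thresholds like `tolerance` and `max_z` from history rather than hard-coding them, but the shape of each check stays roughly the same.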

Primary Features of a Data Observability Platform

Observability is critical in data management because it provides insights into the health and state of data in a system. This knowledge can help businesses make informed decisions, collaborate more effectively, and achieve greater organizational agility. With a reliable and scalable data observability platform, it becomes easier to identify and fix issues, which can help businesses avoid costly mistakes and downtime.

Data Observability helps to improve the overall DataOps processes by increasing the completeness, usefulness, and quality of data. It enhances trust in data so that businesses can make better data-driven decisions. Here are some of the primary features of a Data Observability platform:

Monitoring

A Data Observability platform monitors the data pipeline and provides an operational view. It also monitors data at rest without extracting it from where it is stored, which makes data observability tools scalable and cost-efficient.

Warning

Data Observability platforms provide alerts for both expected and unexpected events, which helps to avoid data-related issues. Observability offers comprehensive information about data assets so that changes can be made and issues can be fixed proactively.

Tracking

Observability platforms can set and track particular data events. They also analyze the primary invariants, resources, and dependencies, which helps deliver enhanced data observability.

Analysis

Analysis includes automated error detection that adapts to an enterprise’s pipelines and data health. Moreover, once the data is stored, these platforms can help you identify trends, issues, and patterns that may not be apparent from the raw data.

Recording

Data observability platforms record the events in a standard format. It helps to get insights into your data stack to improve data reliability, spending efficiency, and platform performance. Based on the records and analysis, you can figure out action plans to optimize your data systems.
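As an illustration of what "a standard format" could mean in practice, the sketch below serializes each check result as one JSON line with a fixed set of fields. The `ObservabilityEvent` class, its field names, and the `record_event` helper are hypothetical and are not taken from any particular platform.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ObservabilityEvent:
    asset: str        # table or pipeline the event refers to
    check: str        # which check produced the event, e.g. "volume"
    status: str       # "pass" or "fail"
    observed_at: str  # ISO 8601 timestamp in UTC
    details: dict     # free-form measurements behind the status


def record_event(asset, check, status, details, observed_at=None):
    """Return one event as a JSON line with a stable key order."""
    ts = (observed_at or datetime.now(timezone.utc)).isoformat()
    event = ObservabilityEvent(asset, check, status, ts, details)
    return json.dumps(asdict(event), sort_keys=True)


# Hypothetical failed volume check on an "orders" table.
line = record_event(
    "orders",
    "volume",
    "fail",
    {"rows": 50, "expected": 100},
    observed_at=datetime(2024, 1, 1, tzinfo=timezone.utc),
)
print(line)
```

Because every event shares the same fields, records from different pipelines can be stored together and queried later for trends, which is what the analysis and action planning described above depend on.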

Open Source Data Observability

Open-source data observability technologies are valuable for teams looking to optimize their data metrics. If you are looking for a high-quality data observability platform, open-source options are worth considering. An open-source data platform can be helpful because your entire data team can access critical metrics about data performance.

When looking into different data observability vendors, it is vital to consider the features offered in the data software. Data teams should seek open-source software that can swiftly detect any data performance issues and tackle them before they cause damage.

A data observability framework is commonly used as a reference for data teams venturing into data observability platforms. A data observability framework can help data teams identify and address any issues in their data performance. For instance, the framework would include features to detect issues, alert the data team, find and analyze the root cause, and remediate the issue. The open source software used by your business’s data team should cover the core data observability pillars to ensure accurate metrics and the best possible experience.
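The root-cause step of such a framework can be sketched with a small lineage walk: given the assets whose checks failed and a map of what each asset reads from, keep only the failing assets that have no failing ancestor. The function name, the asset names, and the assumption that lineage forms an acyclic graph are all illustrative and not part of any specific tool.

```python
def root_cause_candidates(failing, upstream):
    """Return failing assets that have no failing ancestor.

    failing:  set of asset names whose checks failed
    upstream: dict mapping each asset to the list of assets it reads from
              (assumed to describe an acyclic lineage graph)
    """

    def has_failing_ancestor(asset):
        seen = set()
        stack = list(upstream.get(asset, []))
        while stack:
            node = stack.pop()
            if node in seen:
                continue
            seen.add(node)
            if node in failing:
                return True
            stack.extend(upstream.get(node, []))
        return False

    return sorted(a for a in failing if not has_failing_ancestor(a))


# Hypothetical lineage: orders_raw -> orders_clean -> dashboard
upstream = {
    "dashboard": ["orders_clean"],
    "orders_clean": ["orders_raw"],
    "orders_raw": [],
}
failing = {"dashboard", "orders_clean"}

print(root_cause_candidates(failing, upstream))  # ['orders_clean']
```

Here the dashboard failure is treated as a symptom, so an alert would point the team at `orders_clean` first. Detection, alert routing, and remediation would sit around this step in a complete framework.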

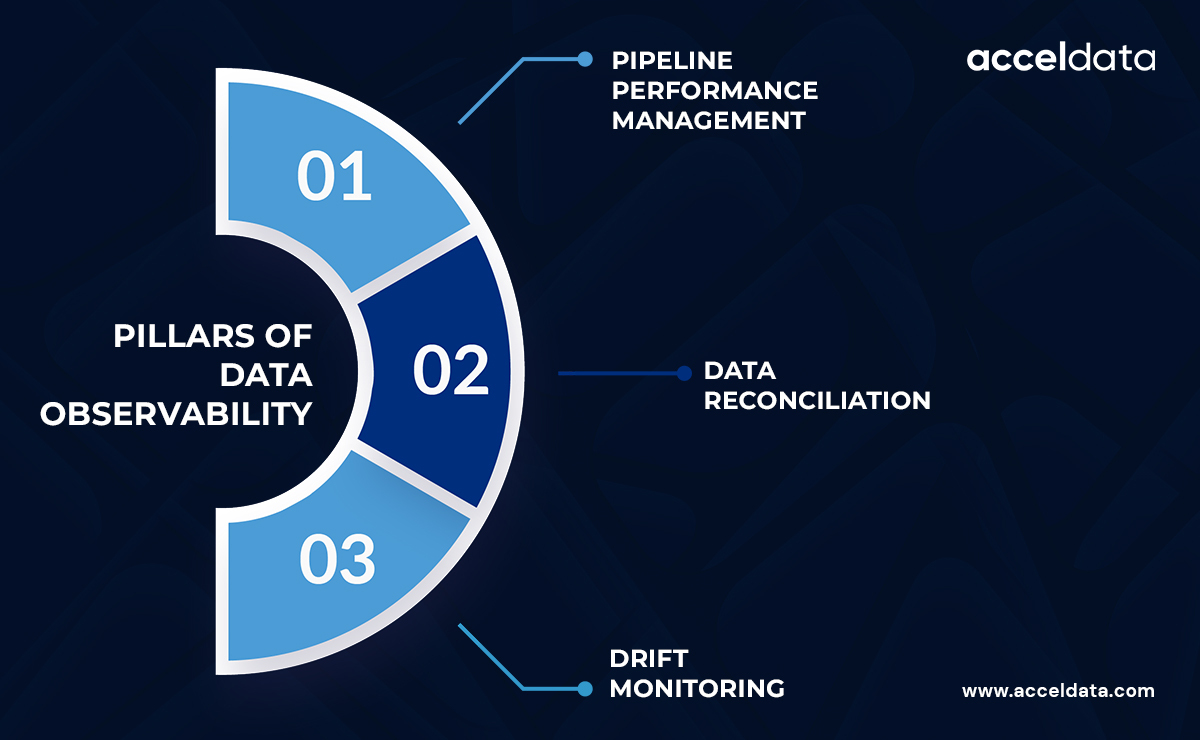

Before selecting observability software, data teams will benefit from learning the three pillars of data reliability/observability. The three data observability pillars to measure are:

- Pipeline performance management

- Data reconciliation

- Drift monitoring
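Two of these pillars, data reconciliation and drift monitoring, lend themselves to a short sketch. The version below assumes that reconciliation means "source and target hold the same rows" and that drift means "the mean of a numeric field has moved relative to a baseline window". The function names and sample rows are hypothetical, and pipeline performance management is not covered.

```python
import hashlib
from statistics import mean


def table_fingerprint(rows):
    """Order-independent fingerprint: row count plus a sum of per-row hashes."""
    total = 0
    for row in rows:
        canonical = repr(sorted(row.items())).encode("utf-8")
        row_hash = int.from_bytes(hashlib.sha256(canonical).digest(), "big")
        total = (total + row_hash) % (2 ** 256)
    return len(rows), total


def reconcile(source_rows, target_rows):
    """Reconciliation: both sides contain the same rows, in any order."""
    return table_fingerprint(source_rows) == table_fingerprint(target_rows)


def relative_drift(baseline, current):
    """Drift: relative change of the mean between baseline and current values."""
    base = mean(baseline)
    if base == 0:
        raise ValueError("baseline mean is zero; relative drift is undefined")
    return abs(mean(current) - base) / abs(base)


# Hypothetical source and target copies of the same table, in different order.
source = [{"id": 1, "amount": 10}, {"id": 2, "amount": 20}]
target = [{"id": 2, "amount": 20}, {"id": 1, "amount": 10}]

print(reconcile(source, target))       # same rows on both sides
print(reconcile(source, target[:1]))   # a row is missing in the target
print(relative_drift([10, 10, 10], [12, 12, 12]))
```

The fingerprint compares values by their Python representation, so `1` and `1.0` count as different. A production check would normalize types first and would usually compare per-partition fingerprints to narrow down where a mismatch sits.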

Data Observability Tools from Gartner

Access to tools that also provide the kind of application performance monitoring Gartner covers is crucial for data teams to understand insights and trends in data metrics. Gartner Research’s most recent Data Observability report found that data observability is now essential for the continued support of existing and modern data management architectures.

The research that Gartner publishes is essential for understanding and using data observability tools. It gives data teams a basis for consistently optimizing data performance metrics. According to the Gartner Observability definition, data observability is essential data software that allows data teams to collect key metrics about their data performance and gather insights on future directions.

Data teams may wish to seek out application performance monitoring. Gartner Magic Quadrant reports are one of the ways that you can determine which application is best for all of your team’s observability needs.

APM tools that Gartner Research discusses in the Gartner Observability Magic Quadrant report include features that observe data users, infrastructure, and pipelines, along with general data not covered in these categories. Software like Acceldata offers data teams crucial APM tools for consistent data monitoring without risking any blind spots that go undetected in manual data monitoring.

Final Words

Ultimately, having data software and tools that offer insightful observability metrics is crucial to improving your data performance. Working on a data team is challenging if you rely on manual processes and do not know how to implement observability without them. Confusion over the definition of data observability, and over the fundamental importance of monitoring data observability metrics, often keeps teams from pursuing companies and software in the data observability market.

To truly understand data observability, your data team must strengthen its knowledge of the data observability principles that the best data teams follow. First, learn the difference between observability and telemetry in data metrics.

Though telemetry is a part of monitoring your data solutions and recording the information found in your data metrics, observability software is crucial because it interprets these metrics and offers valuable options to understand or resolve any issues in your data. Starting off your data journey with an observability framework is crucial.

However, after solidifying your data framework, you must begin using software aimed at helping data teams understand and analyze data metrics. Data teams need a complete overview of the observability metrics for their data operations, or DataOps. Software like Acceldata lets you view your data operations in one platform that provides concrete insights into your business’s data performance.
