The traditional data catalog is, much like the traditional data warehouse, being phased out.

Both have their uses, but they are legacy technologies, and while they still have roles in the modern data stack, those roles are limited.

A new data approach, data discovery, is replacing the traditional data catalog. Data discovery brings must-have capabilities for any business interested in gaining true visibility and control over their data pipelines and distributed data infrastructure.

What is a Data Catalog?

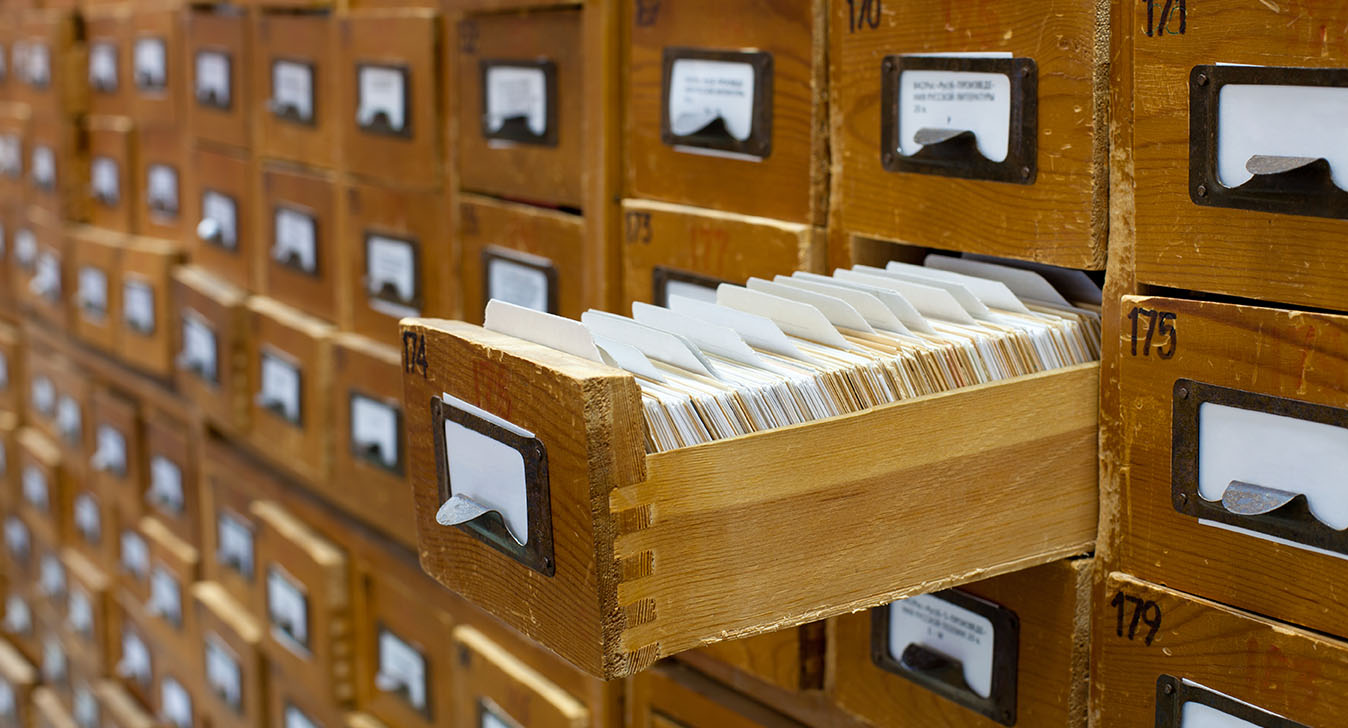

A traditional data catalog is a lot like a classic library card catalog. It’s an inventory of all of your company’s data assets, and it uses metadata to describe those assets: where they are stored, what format they are in, their age, who has used or updated them (i.e., their lineage), their relevance and usefulness to different lines of business, their privacy and compliance status, and so on.

Most of that metadata is painstakingly entered by hand by IT administrators or data professionals, though some data catalogs do automate it to a limited extent.

Many companies started adopting data catalogs in the 2000s to get a handle on their enterprise content management (ECM), master data management (MDM), and, of course, data warehouse deployments.

In fact, data catalogs (and even data dictionaries) go back decades earlier, to an era when relational databases reigned alone. It turns out that even with highly structured tables and rows of data, database administrators benefit from quick snapshots of their database without having to formally query it.

Three Reasons Data Catalogs Don't Work

Traditional data catalogs definitely got the job done for many years. They’ve helped countless employees find the right data without having to pester their overworked IT person. Not only are traditional data catalogs still in widespread use, they are still being built today.

However, several things have changed in the past few years. As is common with older technologies, traditional data catalogs have failed to keep up.

First, there’s been an absolute flood of new data inside enterprises. Not only is there a much higher volume of data, but it’s coming from many more sources — social media, IoT sensors, real-time streams, new applications, containers, and a host of other channels. And rather than structured tables and rows, all of this new unstructured and semi-structured data is being stored in a diversity of raw formats, everything from Excel files to multimedia recordings, usually in lightly-managed, catch-all cloud data lakes.

Second, the growth on the supply side of data has been matched by the growth in demand among users. Managers now check real-time dashboards before making important decisions, rather than wait for weekly or monthly reports from their BI analysts. Increasingly, key decisions are being made autonomously by applications connected to analytics data feeds.

Whether the decision maker turns out to be human or machine, the growth in operational analytics also brought an explosion in data pipelines connecting data sources with analytics apps and dashboards. And the data flowing through each and every data pipeline ends up transformed or changed by the time it reaches its destination.

The problem? Traditional data cataloging only happens once, with metadata generated and recorded at the time data is ingested into the repository (database, data warehouse, or data lake). In modern data infrastructures, however, data changes constantly. For instance, a dataset of event-streamed data can gain or lose quality over time. A dataset also potentially changes every time it is aggregated, combined, loaded into an application, or sent through a data pipeline. Neither traditional data catalogs nor the people managing them can keep up and update the metadata every time your data changes.
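To make the staleness problem concrete, here is a minimal Python sketch that compares a fingerprint recorded at ingest time with the fingerprint of the same dataset after a pipeline step. The `fingerprint` and `is_stale` helpers, the `signups` dataset, and the catalog entry layout are all hypothetical illustrations, not part of any particular catalog product.

```python
import hashlib
import json


def fingerprint(rows):
    """Return a stable hash of a dataset's column names and contents.

    Hypothetical helper: row order matters here, so a re-sorted dataset
    would also count as changed.
    """
    columns = sorted({key for row in rows for key in row})
    payload = json.dumps({"columns": columns, "rows": rows},
                         sort_keys=True, default=str)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def is_stale(catalog_entry, current_rows):
    """True when the data no longer matches what the catalog recorded."""
    return catalog_entry["fingerprint"] != fingerprint(current_rows)


# Metadata captured once, at ingest time (the traditional catalog model).
ingested = [{"user_id": 1, "country": "US"}, {"user_id": 2, "country": "DE"}]
entry = {"name": "signups", "fingerprint": fingerprint(ingested)}

# A pipeline step later enriches every row with a new column.
transformed = [
    dict(row, region="EMEA" if row["country"] == "DE" else "AMER")
    for row in ingested
]

print(is_stale(entry, ingested))     # False
print(is_stale(entry, transformed))  # True
```

A one-time catalog entry has no way to notice the second case unless something re-checks the data after every transformation.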

And that brings us to the third key flaw of traditional data catalogs — their reliance on manual effort. Simply put, traditional data catalogs weren’t built to scale with today’s exponential data growth. It would be like expecting librarians used to manually cataloging books with the Dewey Decimal System to suddenly catalog the entirety of the Web, too.

Even if companies can devote more employees to manually updating their data catalogs, should they? There are many projects more valuable to the business than futilely trying to keep data catalogs updated.

Four Reasons Data Discovery is Better Than Data Catalogs

Data catalogs need to be replaced or, at the very least, have their infrastructure overhauled.

That’s where data discovery comes in.

Data discovery re-invents the process of gathering and updating the metadata for today’s data infrastructures. In particular, data discovery does four things better:

- It automates the process of harvesting attributes about your data assets, minimizing human labor;

- It can discover data wherever it is located in your distributed infrastructure;

- It constantly keeps metadata updated in real time for both data at rest and also data in motion (since data constantly transforms as it travels through your data pipelines);

- It can assign easy-to-understand data health and quality scores that align with the needs of different users in your organization, which yields more relevant results for their data searches.
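As a rough illustration of the first and last points, the Python sketch below harvests basic attributes from a dataset automatically and turns them into a single score. The `profile` and `health_score` functions and the scoring rule (average column completeness) are assumptions made for this example. Real data discovery tools use far richer signals.

```python
from datetime import datetime, timezone


def profile(rows):
    """Harvest basic metadata about a dataset without manual entry."""
    columns = sorted({key for row in rows for key in row})
    total = len(rows)
    stats = {}
    for col in columns:
        present = [row[col] for row in rows if row.get(col) is not None]
        stats[col] = {
            # Python type names observed among the non-null values.
            "types": sorted({type(v).__name__ for v in present}),
            # Share of rows that have a non-null value for this column.
            "completeness": len(present) / total if total else 0.0,
        }
    return {
        "row_count": total,
        "columns": stats,
        "profiled_at": datetime.now(timezone.utc).isoformat(),
    }


def health_score(metadata):
    """Average column completeness on a 0-100 scale (hypothetical rule)."""
    cols = list(metadata["columns"].values())
    if not cols:
        return 0.0
    return round(100 * sum(c["completeness"] for c in cols) / len(cols), 1)


rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": None},
    {"id": 3, "email": "c@example.com"},
    {"id": 4, "email": "d@example.com"},
]
metadata = profile(rows)
print(metadata["columns"]["email"]["completeness"])  # 0.75
print(health_score(metadata))                        # 87.5
```

Because profiling is just a function call, it can be rerun after every pipeline step instead of only once at ingest.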

The shortcomings of current data catalogs and data dictionaries force data engineering and IT teams to devote too much time not just to manually updating metadata, but also to helping data scientists find the right dataset and verify its actual lineage, quality and compliance status.

Imagine what happens as more self-service analysts and citizen data scientists in your business start clamoring for help. The only way to enable data democracy and jumpstart the spread of embedded analytics in your organization is with a data discovery solution.

The difference between traditional data cataloging and data discovery reminds me of the difference between Web directories and search engines. When Yahoo! released its first browsable directory of the entire World-Wide Web back in 1994, there were only 2,738 websites in total. No wonder Yahoo’s founders could create the entire index by hand in their spare time as graduate students at Stanford University.

For several years, Yahoo continued to rely on human teams for manual categorization, augmented by a web crawler. But as the Web exploded in size, search engines such as Google started emerging with completely-automated and more comprehensive indexes, more relevant search results for individual users, and a better user experience overall. More than two decades on, search engines remain supreme.

Acceldata Data Observability is Your Data Discovery Solution

The Acceldata Data Observability platform provides powerful data discovery capabilities for the modern data stack. It constantly scans, profiles and tags your data throughout its lifecycle, not just when it is imported. Using machine learning, the Data Observability platform automates the process of classifying and validating data whether it is at rest or in motion, minimizing the manual work for your DataOps and IT teams.

Acceldata enables your users to make simple faceted searches as well as browse data assets that are similar in format or content. The searches and browsing are all managed by role-based access control, meaning users are prevented from viewing documents that they lack the rights to view.
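The general idea of a faceted search filtered by role-based access control can be sketched in a few lines of Python. This is a generic illustration only, not Acceldata's API: the `Asset` class, the `faceted_search` function, and the role names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """A hypothetical catalog entry with two facets and an access list."""
    name: str
    fmt: str
    domain: str
    allowed_roles: frozenset


def faceted_search(assets, user_roles, **facets):
    """Return assets the user may see that match every requested facet."""
    roles = set(user_roles)
    results = []
    for asset in assets:
        # Access control first: skip assets the user has no role for.
        if not (asset.allowed_roles & roles):
            continue
        # Then apply the facet filters, e.g. fmt="parquet".
        if all(getattr(asset, key) == value for key, value in facets.items()):
            results.append(asset)
    return results


assets = [
    Asset("orders", "parquet", "sales", frozenset({"analyst", "engineer"})),
    Asset("payroll", "parquet", "hr", frozenset({"hr_admin"})),
    Asset("clickstream", "json", "marketing", frozenset({"analyst"})),
]

hits = faceted_search(assets, {"analyst"}, fmt="parquet")
print([asset.name for asset in hits])  # ['orders']
```

In this sketch the permission check runs before the facet filters, so an asset such as `payroll` never appears in an analyst's results even though it matches the requested format.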
